Artificial Intelligence Midterm Exam

Artificial Intelligence A Modern Approach

Third Edition

PRENTICE HALL SERIES IN ARTIFICIAL INTELLIGENCE Stuart Russell and Peter Norvig, Editors

FORSYTH & PONCE Computer Vision: A Modern Approach
GRAHAM ANSI Common Lisp
JURAFSKY & MARTIN Speech and Language Processing, 2nd ed.
NEAPOLITAN Learning Bayesian Networks
RUSSELL & NORVIG Artificial Intelligence: A Modern Approach, 3rd ed.

Stuart J. Russell and Peter Norvig

Contributing writers: Ernest Davis, Douglas D. Edwards, David Forsyth, Nicholas J. Hay, Jitendra M. Malik, Vibhu Mittal, Mehran Sahami, Sebastian Thrun

Upper Saddle River Boston Columbus San Francisco New York Indianapolis London Toronto Sydney Singapore Tokyo Montreal Dubai Madrid Hong Kong Mexico City Munich Paris Amsterdam Cape Town

Vice President and Editorial Director, ECS: Marcia J. Horton
Editor-in-Chief: Michael Hirsch
Executive Editor: Tracy Dunkelberger
Assistant Editor: Melinda Haggerty
Editorial Assistant: Allison Michael
Vice President, Production: Vince O’Brien
Senior Managing Editor: Scott Disanno
Production Editor: Jane Bonnell
Senior Operations Supervisor: Alan Fischer
Operations Specialist: Lisa McDowell
Marketing Manager: Erin Davis
Marketing Assistant: Mack Patterson
Cover Designers: Kirsten Sims and Geoffrey Cassar
Cover Images: Stan Honda/Getty, Library of Congress, NASA, National Museum of Rome, Peter Norvig, Ian Parker, Shutterstock, Time Life/Getty
Interior Designers: Stuart Russell and Peter Norvig
Copy Editor: Mary Lou Nohr
Art Editor: Greg Dulles
Media Editor: Daniel Sandin
Media Project Manager: Danielle Leone

Copyright © 2010, 2003, 1995 by Pearson Education, Inc., Upper Saddle River, New Jersey 07458. All rights reserved. Manufactured in the United States of America. This publication is protected by Copyright and permissions should be obtained from the publisher prior to any prohibited reproduction, storage in a retrieval system, or transmission in any form or by any means, electronic, mechanical, photocopying, recording, or likewise. To obtain permission(s) to use materials from this work, please submit a written request to Pearson Higher Education, Permissions Department, 1 Lake Street, Upper Saddle River, NJ 07458.

The author and publisher of this book have used their best efforts in preparing this book. These efforts include the development, research, and testing of the theories and programs to determine their effectiveness. The author and publisher make no warranty of any kind, expressed or implied, with regard to these programs or the documentation contained in this book. The author and publisher shall not be liable in any event for incidental or consequential damages in connection with, or arising out of, the furnishing, performance, or use of these programs.

Library of Congress Cataloging-in-Publication Data on File

10 9 8 7 6 5 4 3 2 1 ISBN-13: 978-0-13-604259-4 ISBN-10: 0-13-604259-7

For Loy, Gordon, Lucy, George, and Isaac — S.J.R.

For Kris, Isabella, and Juliet — P.N.

Preface

Artificial Intelligence (AI) is a big field, and this is a big book. We have tried to explore the full breadth of the field, which encompasses logic, probability, and continuous mathematics; perception, reasoning, learning, and action; and everything from microelectronic devices to robotic planetary explorers. The book is also big because we go into some depth.

The subtitle of this book is “A Modern Approach.” The intended meaning of this rather empty phrase is that we have tried to synthesize what is now known into a common framework, rather than trying to explain each subfield of AI in its own historical context. We apologize to those whose subfields are, as a result, less recognizable.

New to this edition

This edition captures the changes in AI that have taken place since the last edition in 2003. There have been important applications of AI technology, such as the widespread deployment of practical speech recognition, machine translation, autonomous vehicles, and household robotics. There have been algorithmic landmarks, such as the solution of the game of checkers. And there has been a great deal of theoretical progress, particularly in areas such as probabilistic reasoning, machine learning, and computer vision. Most important from our point of view is the continued evolution in how we think about the field, and thus how we organize the book. The major changes are as follows:

• We place more emphasis on partially observable and nondeterministic environments, especially in the nonprobabilistic settings of search and planning. The concepts of belief state (a set of possible worlds) and state estimation (maintaining the belief state) are introduced in these settings; later in the book, we add probabilities.

• In addition to discussing the types of environments and types of agents, we now cover in more depth the types of representations that an agent can use. We distinguish among atomic representations (in which each state of the world is treated as a black box), factored representations (in which a state is a set of attribute/value pairs), and structured representations (in which the world consists of objects and relations between them).

• Our coverage of planning goes into more depth on contingent planning in partially observable environments and includes a new approach to hierarchical planning.

• We have added new material on first-order probabilistic models, including open-universe models for cases where there is uncertainty as to what objects exist.

• We have completely rewritten the introductory machine-learning chapter, stressing a wider variety of more modern learning algorithms and placing them on a firmer theoretical footing.

• We have expanded coverage of Web search and information extraction, and of techniques for learning from very large data sets.

• 20% of the citations in this edition are to works published after 2003.

• We estimate that about 20% of the material is brand new. The remaining 80% reflects older work but has been largely rewritten to present a more unified picture of the field.
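
As a rough illustration of the first item in the list above, the following Python sketch treats a belief state as a set of possible worlds and state estimation as a predict-then-filter step, with no probabilities involved. The four-cell corridor, the slipping "right" action, the wall sensor, and all function names are assumptions invented for this sketch; they are not taken from the book's pseudocode or code repository.

```python
from typing import FrozenSet, Iterable

State = int      # hypothetical: a position 0..3 in a four-cell corridor
Action = str
Percept = str


def results(state: State, action: Action) -> Iterable[State]:
    """Nondeterministic transition model (assumed for this toy world):
    'right' either moves one cell right (clamped at cell 3) or slips and stays."""
    if action == "right":
        return {state, min(state + 1, 3)}
    return {state}


def observe(state: State) -> Percept:
    """Sensor model (assumed): the agent only senses whether it touches the right wall."""
    return "wall" if state == 3 else "open"


def predict(belief: FrozenSet[State], action: Action) -> FrozenSet[State]:
    """Collect every world reachable from some world in the belief state."""
    return frozenset(s2 for s in belief for s2 in results(s, action))


def update(belief: FrozenSet[State], percept: Percept) -> FrozenSet[State]:
    """Keep only the worlds that are consistent with the percept."""
    return frozenset(s for s in belief if observe(s) == percept)


def estimate(belief: FrozenSet[State], action: Action, percept: Percept) -> FrozenSet[State]:
    """State estimation: maintain the belief state after one action and one percept."""
    return update(predict(belief, action), percept)


belief = frozenset({0, 1})                   # the agent starts in cell 0 or cell 1
belief = estimate(belief, "right", "open")   # worlds 0, 1, and 2 remain possible
belief = estimate(belief, "right", "wall")   # only world 3 is consistent with "wall"
```

Representing the belief state as a `frozenset` keeps it hashable, so a search procedure could treat belief states themselves as nodes; that choice is one option among several.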


Overview of the book

The main unifying theme is the idea of an intelligent agent. We define AI as the study of agents that receive percepts from the environment and perform actions. Each such agent implements a function that maps percept sequences to actions, and we cover different ways to represent these functions, such as reactive agents, real-time planners, and decision-theoretic systems. We explain the role of learning as extending the reach of the designer into unknown environments, and we show how that role constrains agent design, favoring explicit knowledge representation and reasoning. We treat robotics and vision not as independently defined problems, but as occurring in the service of achieving goals. We stress the importance of the task environment in determining the appropriate agent design.
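
To make the phrase "a function that maps percept sequences to actions" concrete, here is a minimal Python sketch of a reactive agent. The two-square vacuum world, the percept format, and the names `reflex_vacuum_agent` and `run` are assumptions chosen for illustration, not the book's own code.

```python
from typing import Callable, List, Sequence, Tuple

Percept = Tuple[str, str]   # assumed format: (location, status), e.g. ("A", "Dirty")
Action = str


def reflex_vacuum_agent(percepts: Sequence[Percept]) -> Action:
    """Agent function: takes the percept sequence so far and returns an action.
    This reactive agent ignores the history and uses only the latest percept."""
    location, status = percepts[-1]
    if status == "Dirty":
        return "Suck"
    return "Right" if location == "A" else "Left"


def run(agent: Callable[[Sequence[Percept]], Action],
        incoming: Sequence[Percept]) -> List[Action]:
    """Feed percepts to the agent one at a time, passing the growing history."""
    history: List[Percept] = []
    actions: List[Action] = []
    for percept in incoming:
        history.append(percept)
        actions.append(agent(tuple(history)))
    return actions


actions = run(reflex_vacuum_agent, [("A", "Dirty"), ("A", "Clean"), ("B", "Dirty")])
# actions == ["Suck", "Right", "Suck"]
```

A planner or a decision-theoretic agent would fit the same signature; only the body that turns the percept history into an action would differ.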

Our primary aim is to convey the ideas that have emerged over the past fifty years of AI research and the past two millennia of related work. We have tried to avoid excessive formality in the presentation of these ideas while retaining precision. We have included pseudocode algorithms to make the key ideas concrete; our pseudocode is described in Appendix B.

This book is primarily intended for use in an undergraduate course or course sequence. The book has 27 chapters, each requiring about a week’s worth of lectures, so working through the whole book requires a two-semester sequence. A one-semester course can use selected chapters to suit the interests of the instructor and students. The book can also be used in a graduate-level course (perhaps with the addition of some of the primary sources suggested in the bibliographical notes). Sample syllabi are available at the book’s Web site, aima.cs.berkeley.edu. The only prerequisite is familiarity with basic concepts of computer science (algorithms, data structures, complexity) at a sophomore level. Freshman calculus and linear algebra are useful for some of the topics; the required mathematical background is supplied in Appendix A.

Exercises are given at the end of each chapter. Exercises requiring significant programming are marked with a keyboard icon. These exercises can best be solved by taking advantage of the code repository at aima.cs.berkeley.edu. Some of them are large enough to be considered term projects. A number of exercises require some investigation of the literature; these are marked with a book icon.

Throughout the book, important points are marked with a pointing icon. We have included an extensive index of around 6,000 items to make it easy to find things in the book. Wherever a new term is first defined, it is also marked in the margin.

About the Web site

aima.cs.berkeley.edu, the Web site for the book, contains

• implementations of the algorithms in the book in several programming languages,

• a list of over 1000 schools that have used the book, many with links to online course materials and syllabi,

• an annotated list of over 800 links to sites around the Web with useful AI content,

• a chapter-by-chapter list of supplementary material and links,

• instructions on how to join a discussion group for the book,

• instructions on how to contact the authors with questions or comments,

• instructions on how to report errors in the book, in the likely event that some exist, and

• slides and other materials for instructors.

About the cover

The cover depicts the final position from the decisive game 6 of the 1997 match between chess champion Garry Kasparov and program DEEP BLUE. Kasparov, playing Black, was forced to resign, making this the first time a computer had beaten a world champion in a chess match. Kasparov is shown at the top. To his left is the Asimo humanoid robot and to his right is Thomas Bayes (1702–1761), whose ideas about probability as a measure of belief underlie much of modern AI technology. Below that we see a Mars Exploration Rover, a robot that landed on Mars in 2004 and has been exploring the planet ever since. To the right is Alan Turing (1912–1954), whose fundamental work defined the fields of computer science in general and artificial intelligence in particular. At the bottom is Shakey (1966–1972), the first robot to combine perception, world-modeling, planning, and learning. With Shakey is project leader Charles Rosen (1917–2002). At the bottom right is Aristotle (384 B.C.–322 B.C.), who pioneered the study of logic; his work was state of the art until the 19th century (copy of a bust by Lysippos). At the bottom left, lightly screened behind the authors’ names, is a planning algorithm by Aristotle from De Motu Animalium in the original Greek. Behind the title is a portion of the CPSC Bayesian network for medical diagnosis (Pradhan et al., 1994). Behind the chess board is part of a Bayesian logic model for detecting nuclear explosions from seismic signals.

Credits: Stan Honda/Getty (Kasparov), Library of Congress (Bayes), NASA (Mars rover), National Museum of Rome (Aristotle), Peter Norvig (book), Ian Parker (Berkeley skyline), Shutterstock (Asimo, Chess pieces), Time Life/Getty (Shakey, Turing).

Acknowledgments

This book would not have been possible without the many contributors whose names did not make it to the cover. Jitendra Malik and David Forsyth wrote Chapter 24 (computer vision) and Sebastian Thrun wrote Chapter 25 (robotics). Vibhu Mittal wrote part of Chapter 22 (natural language). Nick Hay, Mehran Sahami, and Ernest Davis wrote some of the exercises. Zoran Duric (George Mason), Thomas C. Henderson (Utah), Leon Reznik (RIT), Michael Gourley (Central Oklahoma) and Ernest Davis (NYU) reviewed the manuscript and made helpful suggestions. We thank Ernie Davis in particular for his tireless ability to read multiple drafts and help improve the book. Nick Hay whipped the bibliography into shape and on deadline stayed up to 5:30 AM writing code to make the book better. Jon Barron formatted and improved the diagrams in this edition, while Tim Huang, Mark Paskin, and Cynthia Bruyns helped with diagrams and algorithms in previous editions. Ravi Mohan and Ciaran O’Reilly wrote and maintain the Java code examples on the Web site. John Canny wrote the robotics chapter for the first edition and Douglas Edwards researched the historical notes. Tracy Dunkelberger, Allison Michael, Scott Disanno, and Jane Bonnell at Pearson tried their best to keep us on schedule and made many helpful suggestions. Most helpful of all has been Julie Sussman, P.P.A., who read every chapter and provided extensive improvements. In previous editions we had proofreaders who would tell us when we left out a comma and said which when we meant that; Julie told us when we left out a minus sign and said x_i when we meant x_j. For every typo or confusing explanation that remains in the book, rest assured that Julie has fixed at least five. She persevered even when a power failure forced her to work by lantern light rather than LCD glow.

Stuart would like to thank his parents for their support and encouragement and his wife, Loy Sheflott, for her endless patience and boundless wisdom. He hopes that Gordon, Lucy, George, and Isaac will soon be reading this book after they have forgiven him for working so long on it. RUGS (Russell’s Unusual Group of Students) have been unusually helpful, as always.

Peter would like to thank his parents (Torsten and Gerda) for getting him started, and his wife (Kris), children (Bella and Juliet), colleagues, and friends for encouraging and tolerating him through the long hours of writing and longer hours of rewriting.

We both thank the librarians at Berkeley, Stanford, and NASA and the developers of CiteSeer, Wikipedia, and Google, who have revolutionized the way we do research. We can’t acknowledge all the people who have used the book and made suggestions, but we would like to note the especially helpful comments of Gagan Aggarwal, Eyal Amir, Ion Androutsopoulos, Krzysztof Apt, Warren Haley Armstrong, Ellery Aziel, Jeff Van Baalen, Darius Bacon, Brian Baker, Shumeet Baluja, Don Barker, Tony Barrett, James Newton Bass, Don Beal, Howard Beck, Wolfgang Bibel, John Binder, Larry Bookman, David R. Boxall, Ronen Brafman, John Bresina, Gerhard Brewka, Selmer Bringsjord, Carla Brodley, Chris Brown, Emma Brunskill, Wilhelm Burger, Lauren Burka, Carlos Bustamante, Joao Cachopo, Murray Campbell, Norman Carver, Emmanuel Castro, Anil Chakravarthy, Dan Chisarick, Berthe Choueiry, Roberto Cipolla, David Cohen, James Coleman, Julie Ann Comparini, Corinna Cortes, Gary Cottrell, Ernest Davis, Tom Dean, Rina Dechter, Tom Dietterich, Peter Drake, Chuck Dyer, Doug Edwards, Robert Egginton, Asma’a El-Budrawy, Barbara Engelhardt, Kutluhan Erol, Oren Etzioni, Hana Filip, Douglas Fisher, Jeffrey Forbes, Ken Ford, Eric Fosler-Lussier, John Fosler, Jeremy Frank, Alex Franz, Bob Futrelle, Marek Galecki, Stefan Gerberding, Stuart Gill, Sabine Glesner, Seth Golub, Gosta Grahne, Russ Greiner, Eric Grimson, Barbara Grosz, Larry Hall, Steve Hanks, Othar Hansson, Ernst Heinz, Jim Hendler, Christoph Herrmann, Paul Hilfinger, Robert Holte, Vasant Honavar, Tim Huang, Seth Hutchinson, Joost Jacob, Mark Jelasity, Magnus Johansson, Istvan Jonyer, Dan Jurafsky, Leslie Kaelbling, Keiji Kanazawa, Surekha Kasibhatla, Simon Kasif, Henry Kautz, Gernot Kerschbaumer, Max Khesin, Richard Kirby, Dan Klein, Kevin Knight, Roland Koenig, Sven Koenig, Daphne Koller, Rich Korf, Benjamin Kuipers, James Kurien, John Lafferty, John Laird, Gus Larsson, John Lazzaro, Jon LeBlanc, Jason Leatherman, Frank Lee, Jon Lehto, Edward Lim, Phil Long, Pierre Louveaux, Don Loveland, Sridhar Mahadevan, Tony Mancill, Jim Martin, Andy Mayer, John McCarthy, David McGrane, Jay Mendelsohn, Risto Miikkulainen, Brian Milch, Steve Minton, Vibhu Mittal, Mehryar Mohri, Leora Morgenstern, Stephen Muggleton, Kevin Murphy, Ron Musick, Sung Myaeng, Eric Nadeau, Lee Naish, Pandu Nayak, Bernhard Nebel, Stuart Nelson, XuanLong Nguyen, Nils Nilsson, Illah Nourbakhsh, Ali Nouri, Arthur Nunes-Harwitt, Steve Omohundro, David Page, David Palmer, David Parkes, Ron Parr, Mark Paskin, Tony Passera, Amit Patel, Michael Pazzani, Fernando Pereira, Joseph Perla, Wim Pijls, Ira Pohl, Martha Pollack, David Poole, Bruce Porter, Malcolm Pradhan, Bill Pringle, Lorraine Prior, Greg Provan, William Rapaport, Deepak Ravichandran, Ioannis Refanidis, Philip Resnik, Francesca Rossi, Sam Roweis, Richard Russell, Jonathan Schaeffer, Richard Scherl, Hinrich Schuetze, Lars Schuster, Bart Selman, Soheil Shams, Stuart Shapiro, Jude Shavlik, Yoram Singer, Satinder Singh, Daniel Sleator, David Smith, Bryan So, Robert Sproull, Lynn Stein, Larry Stephens, Andreas Stolcke, Paul Stradling, Devika Subramanian, Marek Suchenek, Rich Sutton, Jonathan Tash, Austin Tate, Bas Terwijn, Olivier Teytaud, Michael Thielscher, William Thompson, Sebastian Thrun, Eric Tiedemann, Mark Torrance, Randall Upham, Paul Utgoff, Peter van Beek, Hal Varian, Paulina Varshavskaya, Sunil Vemuri, Vandi Verma, Ubbo Visser, Jim Waldo, Toby Walsh, Bonnie Webber, Dan Weld, Michael Wellman, Kamin Whitehouse, Michael Dean White, Brian Williams, David Wolfe, Jason Wolfe, Bill Woods, Alden Wright, Jay Yagnik, Mark Yasuda, Richard Yen, Eliezer Yudkowsky, Weixiong Zhang, Ming Zhao, Shlomo Zilberstein, and our esteemed colleague Anonymous Reviewer.

About the Authors

Stuart Russell was born in 1962 in Portsmouth, England. He received his B.A. with first-class honours in physics from Oxford University in 1982, and his Ph.D. in computer science from Stanford in 1986. He then joined the faculty of the University of California at Berkeley, where he is a professor of computer science, director of the Center for Intelligent Systems, and holder of the Smith–Zadeh Chair in Engineering. In 1990, he received the Presidential Young Investigator Award of the National Science Foundation, and in 1995 he was cowinner of the Computers and Thought Award. He was a 1996 Miller Professor of the University of California and was appointed to a Chancellor’s Professorship in 2000. In 1998, he gave the Forsythe Memorial Lectures at Stanford University. He is a Fellow and former Executive Council member of the American Association for Artificial Intelligence. He has published over 100 papers on a wide range of topics in artificial intelligence. His other books include The Use of Knowledge in Analogy and Induction and (with Eric Wefald) Do the Right Thing: Studies in Limited Rationality.

Peter Norvig is currently Director of Research at Google, Inc., and was the director responsible for the core Web search algorithms from 2002 to 2005. He is a Fellow of the American Association for Artificial Intelligence and the Association for Computing Machinery. Previously, he was head of the Computational Sciences Division at NASA Ames Research Center, where he oversaw NASA’s research and development in artificial intelligence and robotics, and chief scientist at Junglee, where he helped develop one of the first Internet information extraction services. He received a B.S. in applied mathematics from Brown University and a Ph.D. in computer science from the University of California at Berkeley. He received the Distinguished Alumni and Engineering Innovation awards from Berkeley and the Exceptional Achievement Medal from NASA. He has been a professor at the University of Southern California and a research faculty member at Berkeley. His other books are Paradigms of AI Programming: Case Studies in Common Lisp and Verbmobil: A Translation System for Face-to-Face Dialog and Intelligent Help Systems for UNIX.

Contents

I Artificial Intelligence

1 Introduction
1.1 What Is AI?
1.2 The Foundations of Artificial Intelligence
1.3 The History of Artificial Intelligence
1.4 The State of the Art
1.5 Summary, Bibliographical and Historical Notes, Exercises

2 Intelligent Agents
2.1 Agents and Environments
2.2 Good Behavior: The Concept of Rationality
2.3 The Nature of Environments
2.4 The Structure of Agents
2.5 Summary, Bibliographical and Historical Notes, Exercises

II Problem-solving

3 Solving Problems by Searching
3.1 Problem-Solving Agents
3.2 Example Problems
3.3 Searching for Solutions
3.4 Uninformed Search Strategies
3.5 Informed (Heuristic) Search Strategies
3.6 Heuristic Functions
3.7 Summary, Bibliographical and Historical Notes, Exercises

4 Beyond Classical Search
4.1 Local Search Algorithms and Optimization Problems
4.2 Local Search in Continuous Spaces
4.3 Searching with Nondeterministic Actions
4.4 Searching with Partial Observations
4.5 Online Search Agents and Unknown Environments
4.6 Summary, Bibliographical and Historical Notes, Exercises

5 Adversarial Search
5.1 Games
5.2 Optimal Decisions in Games
5.3 Alpha–Beta Pruning
5.4 Imperfect Real-Time Decisions
5.5 Stochastic Games
5.6 Partially Observable Games
5.7 State-of-the-Art Game Programs
5.8 Alternative Approaches
5.9 Summary, Bibliographical and Historical Notes, Exercises

6 Constraint Satisfaction Problems
6.1 Defining Constraint Satisfaction Problems
6.2 Constraint Propagation: Inference in CSPs
6.3 Backtracking Search for CSPs
6.4 Local Search for CSPs
6.5 The Structure of Problems
6.6 Summary, Bibliographical and Historical Notes, Exercises

III Knowledge, reasoning, and planning

7 Logical Agents
7.1 Knowledge-Based Agents
7.2 The Wumpus World
7.3 Logic
7.4 Propositional Logic: A Very Simple Logic
7.5 Propositional Theorem Proving
7.6 Effective Propositional Model Checking
7.7 Agents Based on Propositional Logic
7.8 Summary, Bibliographical and Historical Notes, Exercises

8 First-Order Logic
8.1 Representation Revisited
8.2 Syntax and Semantics of First-Order Logic
8.3 Using First-Order Logic
8.4 Knowledge Engineering in First-Order Logic
8.5 Summary, Bibliographical and Historical Notes, Exercises

9 Inference in First-Order Logic
9.1 Propositional vs. First-Order Inference
9.2 Unification and Lifting
9.3 Forward Chaining
9.4 Backward Chaining
9.5 Resolution
9.6 Summary, Bibliographical and Historical Notes, Exercises

10 Classical Planning
10.1 Definition of Classical Planning
10.2 Algorithms for Planning as State-Space Search
10.3 Planning Graphs
10.4 Other Classical Planning Approaches
10.5 Analysis of Planning Approaches
10.6 Summary, Bibliographical and Historical Notes, Exercises

11 Planning and Acting in the Real World
11.1 Time, Schedules, and Resources
11.2 Hierarchical Planning
11.3 Planning and Acting in Nondeterministic Domains
11.4 Multiagent Planning
11.5 Summary, Bibliographical and Historical Notes, Exercises

12 Knowledge Representation
12.1 Ontological Engineering
12.2 Categories and Objects
12.3 Events
12.4 Mental Events and Mental Objects
12.5 Reasoning Systems for Categories
12.6 Reasoning with Default Information
12.7 The Internet Shopping World
12.8 Summary, Bibliographical and Historical Notes, Exercises

IV Uncertain knowledge and reasoning

13 Quantifying Uncertainty
13.1 Acting under Uncertainty
13.2 Basic Probability Notation
13.3 Inference Using Full Joint Distributions
13.4 Independence
13.5 Bayes’ Rule and Its Use
13.6 The Wumpus World Revisited
13.7 Summary, Bibliographical and Historical Notes, Exercises

14 Probabilistic Reasoning
14.1 Representing Knowledge in an Uncertain Domain
14.2 The Semantics of Bayesian Networks
14.3 Efficient Representation of Conditional Distributions
14.4 Exact Inference in Bayesian Networks
14.5 Approximate Inference in Bayesian Networks
14.6 Relational and First-Order Probability Models
14.7 Other Approaches to Uncertain Reasoning
14.8 Summary, Bibliographical and Historical Notes, Exercises

15 Probabilistic Reasoning over Time
15.1 Time and Uncertainty
15.2 Inference in Temporal Models
15.3 Hidden Markov Models
15.4 Kalman Filters
15.5 Dynamic Bayesian Networks
15.6 Keeping Track of Many Objects
15.7 Summary, Bibliographical and Historical Notes, Exercises

16 Making Simple Decisions
16.1 Combining Beliefs and Desires under Uncertainty
16.2 The Basis of Utility Theory
16.3 Utility Functions
16.4 Multiattribute Utility Functions
16.5 Decision Networks
16.6 The Value of Information
16.7 Decision-Theoretic Expert Systems
16.8 Summary, Bibliographical and Historical Notes, Exercises

17 Making Complex Decisions
17.1 Sequential Decision Problems
17.2 Value Iteration
17.3 Policy Iteration
17.4 Partially Observable MDPs
17.5 Decisions with Multiple Agents: Game Theory
17.6 Mechanism Design
17.7 Summary, Bibliographical and Historical Notes, Exercises

V Learning

18 Learning from Examples 693 18.1 Forms of Learning . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 693 18.2 Supervised Learning . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 695 18.3 Learning Decision Trees . . . . . . . . . . . . . . . . . . . . . . . . . . . 697 18.4 Evaluating and Choosing the Best Hypothesis . . . . . . . . . . . . . . . 708 18.5 The Theory of Learning . . . . . . . . . . . . . . . . . . . . . . . . . . . 713 18.6 Regression and Classification with Linear Models . . . . . . . . . . . . . 717 18.7 Artificial Neural Networks . . . . . . . . . . . . . . . . . . . . . . . . . 727 18.8 Nonparametric Models . . . . . . . . . . . . . . . . . . . . . . . . . . . 737 18.9 Support Vector Machines . . . . . . . . . . . . . . . . . . . . . . . . . . 744 18.10 Ensemble Learning . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 748 18.11 Practical Machine Learning . . . . . . . . . . . . . . . . . . . . . . . . . 753 18.12 Summary, Bibliographical and Historical Notes, Exercises . . . . . . . . . 757

19 Knowledge in Learning 768 19.1 A Logical Formulation of Learning . . . . . . . . . . . . . . . . . . . . . 768

Contents xvii

19.2 Knowledge in Learning . . . . . . . . . . . . . . . . . . . . . . . . . . . 777 19.3 Explanation-Based Learning . . . . . . . . . . . . . . . . . . . . . . . . 780 19.4 Learning Using Relevance Information . . . . . . . . . . . . . . . . . . . 784 19.5 Inductive Logic Programming . . . . . . . . . . . . . . . . . . . . . . . . 788 19.6 Summary, Bibliographical and Historical Notes, Exercises . . . . . . . . . 797

20 Learning Probabilistic Models 802 20.1 Statistical Learning . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 802 20.2 Learning with Complete Data . . . . . . . . . . . . . . . . . . . . . . . . 806 20.3 Learning with Hidden Variables: The EM Algorithm . . . . . . . . . . . . 816 20.4 Summary, Bibliographical and Historical Notes, Exercises . . . . . . . . . 825

21 Reinforcement Learning
21.1 Introduction
21.2 Passive Reinforcement Learning
21.3 Active Reinforcement Learning
21.4 Generalization in Reinforcement Learning
21.5 Policy Search
21.6 Applications of Reinforcement Learning
21.7 Summary, Bibliographical and Historical Notes, Exercises

VI Communicating, perceiving, and acting

22 Natural Language Processing
22.1 Language Models
22.2 Text Classification
22.3 Information Retrieval
22.4 Information Extraction
22.5 Summary, Bibliographical and Historical Notes, Exercises

23 Natural Language for Communication
23.1 Phrase Structure Grammars
23.2 Syntactic Analysis (Parsing)
23.3 Augmented Grammars and Semantic Interpretation
23.4 Machine Translation
23.5 Speech Recognition
23.6 Summary, Bibliographical and Historical Notes, Exercises

24 Perception
24.1 Image Formation
24.2 Early Image-Processing Operations
24.3 Object Recognition by Appearance
24.4 Reconstructing the 3D World
24.5 Object Recognition from Structural Information
24.6 Using Vision
24.7 Summary, Bibliographical and Historical Notes, Exercises

25 Robotics
25.1 Introduction
25.2 Robot Hardware
25.3 Robotic Perception
25.4 Planning to Move
25.5 Planning Uncertain Movements
25.6 Moving
25.7 Robotic Software Architectures
25.8 Application Domains
25.9 Summary, Bibliographical and Historical Notes, Exercises

VII Conclusions

26 Philosophical Foundations
26.1 Weak AI: Can Machines Act Intelligently?
26.2 Strong AI: Can Machines Really Think?
26.3 The Ethics and Risks of Developing Artificial Intelligence
26.4 Summary, Bibliographical and Historical Notes, Exercises

27 AI: The Present and Future
27.1 Agent Components
27.2 Agent Architectures
27.3 Are We Going in the Right Direction?
27.4 What If AI Does Succeed?

A Mathematical background
A.1 Complexity Analysis and O() Notation
A.2 Vectors, Matrices, and Linear Algebra
A.3 Probability Distributions

B Notes on Languages and Algorithms
B.1 Defining Languages with Backus–Naur Form (BNF)
B.2 Describing Algorithms with Pseudocode
B.3 Online Help

Bibliography

Index

1 INTRODUCTION

In which we try to explain why we consider artificial intelligence to be a subject most worthy of study, and in which we try to decide what exactly it is, this being a good thing to decide before embarking.

We call ourselves Homo sapiens—man the wise—because our intelligence is so important to us. For thousands of years, we have tried to understand how we think; that is, how a mere handful of matter can perceive, understand, predict, and manipulate a world far larger and more complicated than itself. The field of artificial intelligence, or AI, goes further still: it attempts not just to understand but also to build intelligent entities.

AI is one of the newest fields in science and engineering. Work started in earnest soon after World War II, and the name itself was coined in 1956. Along with molecular biology, AI is regularly cited as the “field I would most like to be in” by scientists in other disciplines. A student in physics might reasonably feel that all the good ideas have already been taken by Galileo, Newton, Einstein, and the rest. AI, on the other hand, still has openings for several full-time Einsteins and Edisons.

AI currently encompasses a huge variety of subfields, ranging from the general (learning and perception) to the specific, such as playing chess, proving mathematical theorems, writing poetry, driving a car on a crowded street, and diagnosing diseases. AI is relevant to any intellectual task; it is truly a universal field.

1.1 WHAT IS AI?

We have claimed that AI is exciting, but we have not said what it is. In Figure 1.1 we see eight definitions of AI, laid out along two dimensions. The definitions on top are concerned with thought processes and reasoning, whereas the ones on the bottom address behavior. The definitions on the left measure success in terms of fidelity to human performance, whereas the ones on the right measure against an ideal performance measure, called rationality. A system is rational if it does the “right thing,” given what it knows.

Historically, all four approaches to AI have been followed, each by different people with different methods. A human-centered approach must be in part an empirical science, involving observations and hypotheses about human behavior. A rationalist¹ approach involves a combination of mathematics and engineering. The various groups have both disparaged and helped each other. Let us look at the four approaches in more detail.

Thinking Humanly
“The exciting new effort to make computers think . . . machines with minds, in the full and literal sense.” (Haugeland, 1985)
“[The automation of] activities that we associate with human thinking, activities such as decision-making, problem solving, learning . . .” (Bellman, 1978)

Thinking Rationally
“The study of mental faculties through the use of computational models.” (Charniak and McDermott, 1985)
“The study of the computations that make it possible to perceive, reason, and act.” (Winston, 1992)

Acting Humanly
“The art of creating machines that perform functions that require intelligence when performed by people.” (Kurzweil, 1990)
“The study of how to make computers do things at which, at the moment, people are better.” (Rich and Knight, 1991)

Acting Rationally
“Computational Intelligence is the study of the design of intelligent agents.” (Poole et al., 1998)
“AI . . . is concerned with intelligent behavior in artifacts.” (Nilsson, 1998)

Figure 1.1 Some definitions of artificial intelligence, organized into four categories.

1.1.1 Acting humanly: The Turing Test approach

The Turing Test, proposed by Alan Turing (1950), was designed to provide a satisfactory operational definition of intelligence. A computer passes the test if a human interrogator, after posing some written questions, cannot tell whether the written responses come from a person or from a computer. Chapter 26 discusses the details of the test and whether a computer would really be intelligent if it passed. For now, we note that programming a computer to pass a rigorously applied test provides plenty to work on. The computer would need to possess the following capabilities:

• natural language processing to enable it to communicate successfully in English;
• knowledge representation to store what it knows or hears;
• automated reasoning to use the stored information to answer questions and to draw new conclusions;
• machine learning to adapt to new circumstances and to detect and extrapolate patterns.

1 By distinguishing between human and rational behavior, we are not suggesting that humans are necessarily “irrational” in the sense of “emotionally unstable” or “insane.” One merely need note that we are not perfect: not all chess players are grandmasters; and, unfortunately, not everyone gets an A on the exam. Some systematic errors in human reasoning are cataloged by Kahneman et al. (1982).


Turing’s test deliberately avoided direct physical interaction between the interrogator and the computer, because physical simulation of a person is unnecessary for intelligence. However, the so-called total Turing Test includes a video signal so that the interrogator can test the subject’s perceptual abilities, as well as the opportunity for the interrogator to pass physical objects “through the hatch.” To pass the total Turing Test, the computer will need

• computer vision to perceive objects, and
• robotics to manipulate objects and move about.

These six disciplines compose most of AI, and Turing deserves credit for designing a test that remains relevant 60 years later. Yet AI researchers have devoted little effort to passing the Turing Test, believing that it is more important to study the underlying principles of intelligence than to duplicate an exemplar. The quest for “artificial flight” succeeded when the Wright brothers and others stopped imitating birds and started using wind tunnels and learning about aerodynamics. Aeronautical engineering texts do not define the goal of their field as making “machines that fly so exactly like pigeons that they can fool even other pigeons.”

1.1.2 Thinking humanly: The cognitive modeling approach

If we are going to say that a given program thinks like a human, we must have some way of determining how humans think. We need to get inside the actual workings of human minds. There are three ways to do this: through introspection—trying to catch our own thoughts as they go by; through psychological experiments—observing a person in action; and through brain imaging—observing the brain in action. Once we have a sufficiently precise theory of the mind, it becomes possible to express the theory as a computer program. If the program’s input–output behavior matches corresponding human behavior, that is evidence that some of the program’s mechanisms could also be operating in humans. For example, Allen Newell and Herbert Simon, who developed GPS, the “General Problem Solver” (Newell and Simon, 1961), were not content merely to have their program solve problems correctly. They were more concerned with comparing the trace of its reasoning steps to traces of human subjects solving the same problems. The interdisciplinary field of cognitive science brings together computer models from AI and experimental techniques from psychology to construct precise and testable theories of the human mind.

Cognitive science is a fascinating field in itself, worthy of several textbooks and at least one encyclopedia (Wilson and Keil, 1999). We will occasionally comment on similarities or differences between AI techniques and human cognition. Real cognitive science, however, is necessarily based on experimental investigation of actual humans or animals. We will leave that for other books, as we assume the reader has only a computer for experimentation.

In the early days of AI there was often confusion between the approaches: an author would argue that an algorithm performs well on a task and that it is therefore a good model of human performance, or vice versa. Modern authors separate the two kinds of claims; this distinction has allowed both AI and cognitive science to develop more rapidly. The two fields continue to fertilize each other, most notably in computer vision, which incorporates neurophysiological evidence into computational models.


1.1.3 Thinking rationally: The “laws of thought” approach

The Greek philosopher Aristotle was one of the first to attempt to codify “right thinking,” that is, irrefutable reasoning processes. His syllogisms provided patterns for argument structures that always yielded correct conclusions when given correct premises—for example, “Socrates is a man; all men are mortal; therefore, Socrates is mortal.” These laws of thought were supposed to govern the operation of the mind; their study initiated the field called logic.

Logicians in the 19th century developed a precise notation for statements about all kinds of objects in the world and the relations among them. (Contrast this with ordinary arithmetic notation, which provides only for statements about numbers.) By 1965, programs existed that could, in principle, solve any solvable problem described in logical notation. (Although if no solution exists, the program might loop forever.) The so-called logicist tradition within artificial intelligence hopes to build on such programs to create intelligent systems.

There are two main obstacles to this approach. First, it is not easy to take informal knowledge and state it in the formal terms required by logical notation, particularly when the knowledge is less than 100% certain. Second, there is a big difference between solving a problem “in principle” and solving it in practice. Even problems with just a few hundred facts can exhaust the computational resources of any computer unless it has some guidance as to which reasoning steps to try first. Although both of these obstacles apply to any attempt to build computational reasoning systems, they appeared first in the logicist tradition.

1.1.4 Acting rationally: The rational agent approach

An agent is just something that acts (agent comes from the Latin agere, to do). Of course, all computer programs do something, but computer agents are expected to do more: operate autonomously, perceive their environment, persist over a prolonged time period, adapt to change, and create and pursue goals. A rational agent is one that acts so as to achieve the best outcome or, when there is uncertainty, the best expected outcome.
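One way to read “best expected outcome” concretely is as a probability-weighted average of outcome values. The sketch below is only an illustration under stated assumptions: it supposes that every action comes with a known list of (probability, value) pairs, and the function name `best_action`, the action names, and all of the numbers are hypothetical rather than taken from the text.

```python
def expected_value(outcomes):
    """Probability-weighted average of a list of (probability, value) pairs."""
    return sum(p * v for p, v in outcomes)


def best_action(actions):
    """Return the action whose outcomes have the highest expected value.

    `actions` maps an action name to its list of (probability, value) pairs.
    """
    return max(actions, key=lambda name: expected_value(actions[name]))


# Hypothetical decision: 30% chance of rain, with made-up outcome values.
umbrella_problem = {
    "take_umbrella": [(0.3, 70), (0.7, 80)],    # expected value 77
    "leave_umbrella": [(0.3, 0), (0.7, 100)],   # expected value 70
}

print(best_action(umbrella_problem))
```

With these invented numbers the agent takes the umbrella even though leaving it behind has the single best possible outcome, which is the difference between the best outcome and the best expected outcome.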

In the “laws of thought” approach to AI, the emphasis was on correct inferences. Making correct inferences is sometimes part of being a rational agent, because one way to act rationally is to reason logically to the conclusion that a given action will achieve one’s goals and then to act on that conclusion. On the other hand, correct inference is not all of rationality; in some situations, there is no provably correct thing to do, but something must still be done. There are also ways of acting rationally that cannot be said to involve inference. For example, recoiling from a hot stove is a reflex action that is usually more successful than a slower action taken after careful deliberation.

All the skills needed for the Turing Test also allow an agent to act rationally. Knowledge representation and reasoning enable agents to reach good decisions. We need to be able to generate comprehensible sentences in natural language to get by in a complex society. We need learning not only for erudition, but also because it improves our ability to generate effective behavior.

The rational-agent approach has two advantages over the other approaches. First, it is more general than the “laws of thought” approach because correct inference is just one of several possible mechanisms for achieving rationality. Second, it is more amenable to scientific development than are approaches based on human behavior or human thought. The standard of rationality is mathematically well defined and completely general, and can be “unpacked” to generate agent designs that provably achieve it. Human behavior, on the other hand, is well adapted for one specific environment and is defined by, well, the sum total of all the things that humans do. This book therefore concentrates on general principles of rational agents and on components for constructing them. We will see that despite the apparent simplicity with which the problem can be stated, an enormous variety of issues come up when we try to solve it. Chapter 2 outlines some of these issues in more detail.

One important point to keep in mind: We will see before too long that achieving perfect rationality—always doing the right thing—is not feasible in complicated environments. The computational demands are just too high. For most of the book, however, we will adopt the working hypothesis that perfect rationality is a good starting point for analysis. It simplifies the problem and provides the appropriate setting for most of the foundational material in the field. Chapters 5 and 17 deal explicitly with the issue of limited rationality—acting appropriately when there is not enough time to do all the computations one might like.

1.2 THE FOUNDATIONS OF ARTIFICIAL INTELLIGENCE

In this section, we provide a brief history of the disciplines that contributed ideas, viewpoints, and techniques to AI. Like any history, this one is forced to concentrate on a small number of people, events, and ideas and to ignore others that also were important. We organize the history around a series of questions. We certainly would not wish to give the impression that these questions are the only ones the disciplines address or that the disciplines have all been working toward AI as their ultimate fruition.

1.2.1 Philosophy

• Can formal rules be used to draw valid conclusions?
• How does the mind arise from a physical brain?
• Where does knowledge come from?
• How does knowledge lead to action?

Aristotle (384–322 B.C.), whose bust appears on the front cover of this book, was the first to formulate a precise set of laws governing the rational part of the mind. He developed an informal system of syllogisms for proper reasoning, which in principle allowed one to generate conclusions mechanically, given initial premises. Much later, Ramon Lull (d. 1315) had the idea that useful reasoning could actually be carried out by a mechanical artifact. Thomas Hobbes (1588–1679) proposed that reasoning was like numerical computation, that “we add and subtract in our silent thoughts.” The automation of computation itself was already well under way. Around 1500, Leonardo da Vinci (1452–1519) designed but did not build a mechanical calculator; recent reconstructions have shown the design to be functional. The first known calculating machine was constructed around 1623 by the German scientist Wilhelm Schickard (1592–1635), although the Pascaline, built in 1642 by Blaise Pascal (1623–1662), is more famous. Pascal wrote that “the arithmetical machine produces effects which appear nearer to thought than all the actions of animals.” Gottfried Wilhelm Leibniz (1646–1716) built a mechanical device intended to carry out operations on concepts rather than numbers, but its scope was rather limited. Leibniz did surpass Pascal by building a calculator that could add, subtract, multiply, and take roots, whereas the Pascaline could only add and subtract. Some speculated that machines might not just do calculations but actually be able to think and act on their own. In his 1651 book Leviathan, Thomas Hobbes suggested the idea of an “artificial animal,” arguing “For what is the heart but a spring; and the nerves, but so many strings; and the joints, but so many wheels.”

It’s one thing to say that the mind operates, at least in part, according to logical rules, and to build physical systems that emulate some of those rules; it’s another to say that the mind itself is such a physical system. René Descartes (1596–1650) gave the first clear discussion of the distinction between mind and matter and of the problems that arise. One problem with a purely physical conception of the mind is that it seems to leave little room for free will: if the mind is governed entirely by physical laws, then it has no more free will than a rock “deciding” to fall toward the center of the earth. Descartes was a strong advocate of the power of reasoning in understanding the world, a philosophy now called rationalism, and one that counts Aristotle and Leibniz as members. But Descartes was also a proponent of dualism. He held that there is a part of the human mind (or soul or spirit) that is outside of nature, exempt from physical laws. Animals, on the other hand, did not possess this dual quality; they could be treated as machines. An alternative to dualism is materialism, which holds that the brain’s operation according to the laws of physics constitutes the mind. Free will is simply the way that the perception of available choices appears to the choosing entity.

Given a physical mind that manipulates knowledge, the next problem is to establish the source of knowledge. The empiricism movement, starting with Francis Bacon’s (1561–1626) Novum Organum,² is characterized by a dictum of John Locke (1632–1704): “Nothing is in the understanding, which was not first in the senses.” David Hume’s (1711–1776) A Treatise of Human Nature (Hume, 1739) proposed what is now known as the principle of induction: that general rules are acquired by exposure to repeated associations between their elements. Building on the work of Ludwig Wittgenstein (1889–1951) and Bertrand Russell (1872–1970), the famous Vienna Circle, led by Rudolf Carnap (1891–1970), developed the doctrine of logical positivism. This doctrine holds that all knowledge can be characterized by logical theories connected, ultimately, to observation sentences that correspond to sensory inputs; thus logical positivism combines rationalism and empiricism.³ The confirmation theory of Carnap and Carl Hempel (1905–1997) attempted to analyze the acquisition of knowledge from experience. Carnap’s book The Logical Structure of the World (1928) defined an explicit computational procedure for extracting knowledge from elementary experiences. It was probably the first theory of mind as a computational process.

2 The Novum Organum is an update of Aristotle’s Organon, or instrument of thought. Thus Aristotle can be seen as both an empiricist and a rationalist.

3 In this picture, all meaningful statements can be verified or falsified either by experimentation or by analysis of the meaning of the words. Because this rules out most of metaphysics, as was the intention, logical positivism was unpopular in some circles.


The final element in the philosophical picture of the mind is the connection between knowledge and action. This question is vital to AI because intelligence requires action as well as reasoning. Moreover, only by understanding how actions are justified can we understand how to build an agent whose actions are justifiable (or rational). Aristotle argued (in De Motu Animalium) that actions are justified by a logical connection between goals and knowledge of the action’s outcome (the last part of this extract also appears on the front cover of this book, in the original Greek):

But how does it happen that thinking is sometimes accompanied by action and sometimes not, sometimes by motion, and sometimes not? It looks as if almost the same thing happens as in the case of reasoning and making inferences about unchanging objects. But in that case the end is a speculative proposition . . . whereas here the conclusion which results from the two premises is an action. . . . I need covering; a cloak is a covering. I need a cloak. What I need, I have to make; I need a cloak. I have to make a cloak. And the conclusion, the “I have to make a cloak,” is an action.

In the Nicomachean Ethics (Book III. 3, 1112b), Aristotle further elaborates on this topic, suggesting an algorithm:

We deliberate not about ends, but about means. For a doctor does not deliberate whether he shall heal, nor an orator whether he shall persuade, . . . They assume the end and consider how and by what means it is attained, and if it seems easily and best produced thereby; while if it is achieved by one means only they consider how it will be achieved by this and by what means this will be achieved, till they come to the first cause, . . . and what is last in the order of analysis seems to be first in the order of becoming. And if we come on an impossibility, we give up the search, e.g., if we need money and this cannot be got; but if a thing appears possible we try to do it.

Aristotle’s algorithm was implemented 2300 years later by Newell and Simon in their GPS program. We would now call it a regression planning system (see Chapter 10).
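The backward reasoning in the two passages above (start from the end, work back through means until reaching something that can be done directly, and give up on an impossibility) can be sketched as a small goal-reduction search. This is a minimal illustration only, not GPS and not the regression planner of Chapter 10; the tables `MEANS` and `DOABLE`, the function `plan`, and the goal names are hypothetical, loosely modeled on the cloak example.

```python
# Hypothetical knowledge: each goal maps to alternative means, and each
# means is a list of subgoals that must all be achieved.
MEANS = {
    "be covered": [["have cloak"]],
    "have cloak": [["buy cloak"], ["make cloak"]],
    "buy cloak": [["have money"]],  # "have money" has no means: a dead end
}

# Goals the agent can bring about directly (the "first cause").
DOABLE = {"make cloak"}


def plan(goal, in_progress=frozenset()):
    """Return a list of directly doable actions achieving `goal`, or None."""
    if goal in DOABLE:
        return [goal]
    if goal in in_progress:
        return None  # circular dependency: treat as impossible
    for subgoals in MEANS.get(goal, []):
        steps = []
        for sub in subgoals:
            sub_plan = plan(sub, in_progress | {goal})
            if sub_plan is None:
                break  # this means fails; try the next alternative
            steps.extend(sub_plan)
        else:
            return steps  # every subgoal of this means was achievable
    return None  # no means works: give up the search


print(plan("be covered"))
```

Buying the cloak is tried first and abandoned because money cannot be obtained in this toy knowledge base, after which the search falls back on making the cloak.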

Goal-based analysis is useful, but does not say what to do when several actions will achieve the goal or when no action will achieve it completely. Antoine Arnauld (1612–1694) correctly described a quantitative formula for deciding what action to take in cases like this (see Chapter 16). John Stuart Mill’s (1806–1873) book Utilitarianism (Mill, 1863) promoted the idea of rational decision criteria in all spheres of human activity. The more formal theory of decisions is discussed in the following section.

1.2.2 Mathematics

• What are the formal rules to draw valid conclusions?

• What can be computed?

• How do we reason with uncertain information?

Philosophers staked out some of the fundamental ideas of AI, but the leap to a formal science required a level of mathematical formalization in three fundamental areas: logic, computation, and probability.

The idea of formal logic can be traced back to the philosophers of ancient Greece, but its mathematical development really began with the work of George Boole (1815–1864), who worked out the details of propositional, or Boolean, logic (Boole, 1847). In 1879, Gottlob Frege (1848–1925) extended Boole’s logic to include objects and relations, creating the first-order logic that is used today.⁴ Alfred Tarski (1902–1983) introduced a theory of reference that shows how to relate the objects in a logic to objects in the real world.

The next step was to determine the limits of what could be done with logic and computation. The first nontrivial algorithm is thought to be Euclid’s algorithm for computing greatest common divisors. The word algorithm (and the idea of studying them) comes from al-Khowarazmi, a Persian mathematician of the 9th century, whose writings also introduced Arabic numerals and algebra to Europe. Boole and others discussed algorithms for logical deduction, and, by the late 19th century, efforts were under way to formalize general mathematical reasoning as logical deduction. In 1930, Kurt Gödel (1906–1978) showed that there exists an effective procedure to prove any true statement in the first-order logic of Frege and Russell, but that first-order logic could not capture the principle of mathematical induction needed to characterize the natural numbers. In 1931, Gödel showed that limits on deduction do exist. His incompleteness theorem showed that in any formal theory as strong as Peano arithmetic (the elementary theory of natural numbers), there are true statements that are undecidable in the sense that they have no proof within the theory.
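Euclid’s algorithm, mentioned above as the first nontrivial algorithm, is short enough to state in full. The sketch below uses the common remainder-based formulation; the function name `gcd` and the sample inputs are chosen here for illustration.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor by Euclid's algorithm (remainder form)."""
    a, b = abs(a), abs(b)
    while b != 0:
        # gcd(a, b) equals gcd(b, a mod b); the second argument shrinks
        # at every step, so the loop must terminate.
        a, b = b, a % b
    return a


print(gcd(1071, 462))  # 1071 -> 462 -> 147 -> 21 -> 0, so this prints 21
```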

This fundamental result can also be interpreted as showing that some functions on the integers cannot be represented by an algorithm—that is, they cannot be computed. This motivated Alan Turing (1912–1954) to try to characterize exactly which functions are computable—capable of being computed. This notion is actually slightly problematic because the notion of a computation or effective procedure really cannot be given a formal definition. However, the Church–Turing thesis, which states that the Turing machine (Turing, 1936) is capable of computing any computable function, is generally accepted as providing a sufficient definition. Turing also showed that there were some functions that no Turing machine can compute. For example, no machine can tell in general whether a given program will return an answer on a given input or run forever.

Although decidability and computability are important to an understanding of computation, the notion of tractability has had an even greater impact. Roughly speaking, a problem is called intractable if the time required to solve instances of the problem grows exponentially with the size of the instances. The distinction between polynomial and exponential growth in complexity was first emphasized in the mid-1960s (Cobham, 1964; Edmonds, 1965). It is important because exponential growth means that even moderately large instances cannot be solved in any reasonable time. Therefore, one should strive to divide the overall problem of generating intelligent behavior into tractable subproblems rather than intractable ones.
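The gap between polynomial and exponential growth is easy to underestimate, so a small numerical comparison may help. The sketch below is illustrative only: the cost functions n^3 and 2^n and the machine speed of 10^9 steps per second are assumptions chosen for the example, not figures from the text.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60
OPS_PER_SECOND = 10 ** 9  # assumed machine speed, purely illustrative


def polynomial_steps(n: int) -> int:
    """Step count of a hypothetical cubic-time algorithm."""
    return n ** 3


def exponential_steps(n: int) -> int:
    """Step count of a hypothetical exponential-time algorithm."""
    return 2 ** n


def years_needed(steps: int) -> float:
    """Running time in years at the assumed machine speed."""
    return steps / OPS_PER_SECOND / SECONDS_PER_YEAR


for n in (10, 50, 100):
    print(n, years_needed(polynomial_steps(n)), years_needed(exponential_steps(n)))
```

Under these assumptions the cubic algorithm finishes an instance of size 100 in about a millisecond, while the exponential one would need on the order of 10^13 years.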

4 Frege’s proposed notation for first-order logic—an arcane combination of textual and geometric features—never became popular.

How can one recognize an intractable problem? The theory of NP-completeness, pioneered by Stephen Cook (1971) and Richard Karp (1972), provides a method. Cook and Karp showed the existence of large classes of canonical combinatorial search and reasoning problems that are NP-complete. Any problem class to which the class of NP-complete problems can be reduced is likely to be intractable. (Although it has not been proved that NP-complete problems are necessarily intractable, most theoreticians believe it.) These results contrast with the optimism with which the popular press greeted the first computers—“Electronic Super-Brains” that were “Faster than Einstein!” Despite the increasing speed of computers, careful use of resources will characterize intelligent systems. Put crudely, the world is an extremely large problem instance! Work in AI has helped explain why some instances of NP-complete problems are hard, yet others are easy (Cheeseman et al., 1991).

Besides logic and computation, the third great contribution of mathematics to AI is the theory of probability. The Italian Gerolamo Cardano (1501–1576) first framed the idea of probability, describing it in terms of the possible outcomes of gambling events. In 1654, Blaise Pascal (1623–1662), in a letter to Pierre Fermat (1601–1665), showed how to predict the future of an unfinished gambling game and assign average payoffs to the gamblers. Probability quickly became an invaluable part of all the quantitative sciences, helping to deal with uncertain measurements and incomplete theories. James Bernoulli (1654–1705), Pierre Laplace (1749–1827), and others advanced the theory and introduced new statistical methods. Thomas Bayes (1702–1761), who appears on the front cover of this book, proposed a rule for updating probabilities in the light of new evidence. Bayes’ rule underlies most modern approaches to uncertain reasoning in AI systems.
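As a small illustration of updating a probability in the light of new evidence, the sketch below applies Bayes’ rule, P(H | E) = P(E | H) P(H) / P(E), to a single hypothesis H and a single piece of evidence E. The function name `posterior` and the diagnostic-test numbers are hypothetical, chosen only to make the arithmetic visible.

```python
def posterior(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Return P(H | E) by Bayes' rule for a binary hypothesis H.

    prior            -- P(H), belief in H before seeing the evidence
    p_e_given_h      -- P(E | H), probability of the evidence if H is true
    p_e_given_not_h  -- P(E | not H), probability of the evidence if H is false
    """
    # Total probability of the evidence, summed over both cases for H.
    p_e = p_e_given_h * prior + p_e_given_not_h * (1.0 - prior)
    return p_e_given_h * prior / p_e


# Hypothetical numbers: a condition with 1% prevalence, a test that is
# positive 90% of the time when the condition is present and 5% of the
# time when it is absent.
print(posterior(prior=0.01, p_e_given_h=0.9, p_e_given_not_h=0.05))
```

With these made-up numbers the posterior is 0.009 / 0.0585, roughly 0.15: a positive result raises the probability substantially, but the low prior keeps it far from certainty.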

1.2.3 Economics

• How should we make decisions so as to maximize payoff?

• How should we do this when others may not go along?

• How should we do this when the payoff may be far in the future?

The science of economics got its start in 1776, when Scottish philosopher Adam Smith (1723–1790) published An Inquiry into the Nature and Causes of the Wealth of Nations. While the ancient Greeks and others had made contributions to economic thought, Smith was the first to treat it as a science, using the idea that economies can be thought of as consisting of individual agents maximizing their own economic well-being. Most people think of economics as being about money, but economists will say that they are really studying how people make choices that lead to preferred outcomes. When McDonald’s offers a hamburger for a dollar, they are asserting that they would prefer the dollar and hoping that customers will prefer the hamburger. The mathematical treatment of “preferred outcomes” or utility was first formalized by Léon Walras (pronounced “Valrasse”) (1834–1910) and was improved by Frank Ramsey (1931) and later by John von Neumann and Oskar Morgenstern in their book The Theory of Games and Economic Behavior (1944).

Decision theory, which combines probability theory with utility theory, provides a formal and complete framework for decisions (economic or otherwise) made under uncertainty, that is, in cases where probabilistic descriptions appropriately capture the decision maker’s environment. This is suitable for “large” economies where each agent need pay no attention to the actions of other agents as individuals. For “small” economies, the situation is much more like a game: the actions of one player can significantly affect the utility of another (either positively or negatively). Von Neumann and Morgenstern’s development of game theory (see also Luce and Raiffa, 1957) included the surprising result that, for some games, a rational agent should adopt policies that are (or at least appear to be) randomized. Unlike decision theory, game theory does not offer an unambiguous prescription for selecting actions.
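The two ideas in this paragraph can be sketched in a few lines. The first part picks the action with the highest expected utility, which is the decision-theoretic prescription. The second part uses matching pennies, a standard example game that the text does not name, to show why a randomized policy can be the rational one. All names, probabilities, and utilities here are hypothetical choices for the illustration.

```python
def expected_utility(outcomes):
    """Expected utility of an action given (probability, utility) pairs."""
    return sum(p * u for p, u in outcomes)


def best_action(actions):
    """Pick the action whose outcome distribution has the highest expected utility."""
    return max(actions, key=lambda name: expected_utility(actions[name]))


# Hypothetical decision: carry an umbrella or not, with a 30% chance of rain.
actions = {
    "umbrella": [(0.3, 8.0), (0.7, 6.0)],      # dry if it rains, encumbered otherwise
    "no_umbrella": [(0.3, 0.0), (0.7, 10.0)],  # soaked if it rains, unencumbered otherwise
}
choice = best_action(actions)


def worst_case_payoff(p_heads):
    """Matching pennies: the row player wins +1 on a match and loses 1 otherwise.

    Returns the row player's expected payoff against the opponent's best reply
    when the row player shows heads with probability p_heads.
    """
    vs_heads = p_heads * 1 + (1 - p_heads) * -1  # opponent shows heads
    vs_tails = p_heads * -1 + (1 - p_heads) * 1  # opponent shows tails
    return min(vs_heads, vs_tails)


print(choice, worst_case_payoff(1.0), worst_case_payoff(0.5))
```

In this game any deterministic policy can be exploited by an opponent who anticipates it, whereas mixing heads and tails equally guarantees an expected payoff of zero.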

For the most part, economists did not address the third question listed above, namely, how to make rational decisions when payoffs from actions are not immediate but instead result from several actions taken in sequence. This topic was pursued in the field of operations research, which emerged in World War II from efforts in Britain to optimize radar installations, and later found civilian applications in complex management decisions. The work of Richard Bellman (1957) formalized a class of sequential decision problems called Markov decision processes, which we study in Chapters 17 and 21.
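To make the idea of delayed payoffs concrete, here is a minimal sketch of value iteration, a standard method for solving Markov decision processes, on a hypothetical two-state problem. The state names, rewards, discount factor `gamma`, and tolerance `tol` are all assumptions chosen for the illustration, not taken from the text.

```python
def value_iteration(states, actions, transition, reward, gamma=0.9, tol=1e-9):
    """Repeat the Bellman update until state values change by less than tol.

    transition[(s, a)] is a list of (probability, next_state) pairs and
    reward[(s, a)] is the immediate payoff for taking action a in state s.
    """
    values = {s: 0.0 for s in states}
    while True:
        new_values = {
            s: max(
                reward[(s, a)]
                + gamma * sum(p * values[s2] for p, s2 in transition[(s, a)])
                for a in actions
            )
            for s in states
        }
        if max(abs(new_values[s] - values[s]) for s in states) < tol:
            return new_values
        values = new_values


# Hypothetical problem: moving from "start" costs 1 now but reaches "goal",
# where waiting pays 2 on every later step.
states = ["start", "goal"]
actions = ["wait", "move"]
transition = {
    ("start", "wait"): [(1.0, "start")],
    ("start", "move"): [(1.0, "goal")],
    ("goal", "wait"): [(1.0, "goal")],
    ("goal", "move"): [(1.0, "start")],
}
reward = {
    ("start", "wait"): 0.0,
    ("start", "move"): -1.0,
    ("goal", "wait"): 2.0,
    ("goal", "move"): 0.0,
}
values = value_iteration(states, actions, transition, reward)
print({s: round(v, 3) for s, v in values.items()})
```

Under these assumptions the immediate cost of moving is outweighed by the discounted stream of later rewards, so the value of "start" is positive even though its best first action has a negative payoff.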

Work in economics and operations research has contributed much to our notion of rational agents, yet for many years AI research developed along entirely separate paths. One reason was the apparent complexity of making rational decisions. The pioneering AI researcher Herbert Simon (1916–2001) won the Nobel Prize in economics in 1978 for his early work showing that models based on satisficing (making decisions that are “good enough,” rather than laboriously calculating an optimal decision) gave a better description of actual human behavior (Simon, 1947). Since the 1990s, there has been a resurgence of interest in decision-theoretic techniques for agent systems (Wellman, 1995).
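The contrast between optimizing and satisficing can be sketched as two selection procedures. This is only one simple reading of the idea, assuming options are examined one at a time and compared against a fixed aspiration threshold; the function names, scores, and threshold are hypothetical.

```python
def optimize(options, utility):
    """Score every option and return the best one."""
    return max(options, key=utility)


def satisfice(options, utility, threshold):
    """Return the first option that is good enough, or None if none qualifies."""
    for option in options:
        if utility(option) >= threshold:
            return option
    return None


# Hypothetical apartment scores, listed in the order they are viewed.
scores = {"first": 5, "second": 8, "third": 9, "fourth": 7}
viewing_order = list(scores)

best = optimize(viewing_order, scores.get)
good_enough = satisfice(viewing_order, scores.get, threshold=7)
print(best, good_enough)
```

The satisficer stops at the second apartment without ever seeing the third, trading a slightly worse outcome for less search effort.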

1.2.4 Neuroscience

• How do brains process information?

Neuroscience is the study of the nervous system, particularly the brain. Although the exact way in which the brain enables thought is one of the great mysteries of science, the fact that it does enable thought has been appreciated for thousands of years because of the evidence that strong blows to the head can lead to mental incapacitation. It has also long been known that human brains are somehow different; in about 335 B.C. Aristotle wrote, “Of all the animals, man has the largest brain in proportion to his size.”5 Still, it was not until the middle of the 18th century that the brain was widely recognized as the seat of consciousness. Before then, candidate locations included the heart and the spleen.

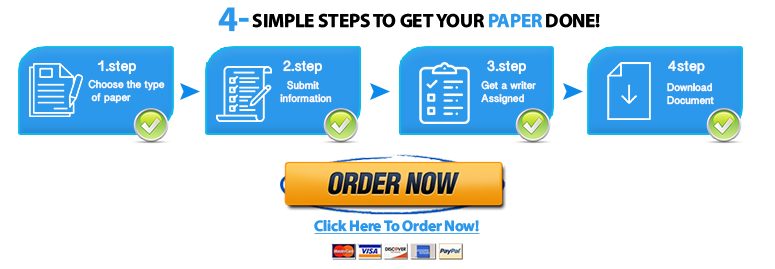

Paul Broca’s (1824–1880) study of aphasia (speech deficit) in brain-damaged patients in 1861 demonstrated the existence of localized areas of the brain responsible for specific cognitive functions. In particular, he showed that speech production was localized to the portion of the left hemisphere now called Broca’s area.6 By that time, it was known that the brain consisted of nerve cells, or neurons, but it was not until 1873 that Camillo Golgi (1843–1926) developed a staining technique allowing the observation of individual neurons in the brain (see Figure 1.2). This technique was used by Santiago Ramon y Cajal (1852–1934) in his pioneering studies of the brain’s neuronal structures.7 Nicolas Rashevsky (1936, 1938) was the first to apply mathematical models to the study of the nervous system.

5 Since then, it has been discovered that the tree shrew (Scandentia) has a higher ratio of brain to body mass.

6 Many cite Alexander Hood (1824) as a possible prior source.

7 Golgi persisted in his belief that the brain’s functions were carried out primarily in a continuous medium in which neurons were embedded, whereas Cajal propounded the “neuronal doctrine.” The two shared the Nobel prize in 1906 but gave mutually antagonistic acceptance speeches.


[Figure: labeled diagram of a neuron. Labels: axon, cell body or soma, nucleus, dendrite, synapses, axonal arborization, axon from another cell, synapse.]